66 Years Ago, the U.S. Navy Predicted Humanlike Machines Based On This Technology

The field of artificial intelligence has been running through a boom-and-bust cycle since its early days.

A room-size computer equipped with a new type of circuitry, the Perceptron, was introduced to the world in 1958 in a brief news story buried deep in The New York Times. The story cited the U.S. Navy as saying that the Perceptron would lead to machines that “will be able to walk, talk, see, write, reproduce itself and be conscious of its existence.”

More than six decades later, similar claims are being made about current artificial intelligence. So, what’s changed in the intervening years? In some ways, not much.

The field of artificial intelligence has been running through a boom-and-bust cycle since its early days. Now, as the field is in yet another boom, many proponents of the technology seem to have forgotten the failures of the past – and the reasons for them. While optimism drives progress, it’s worth paying attention to the history.

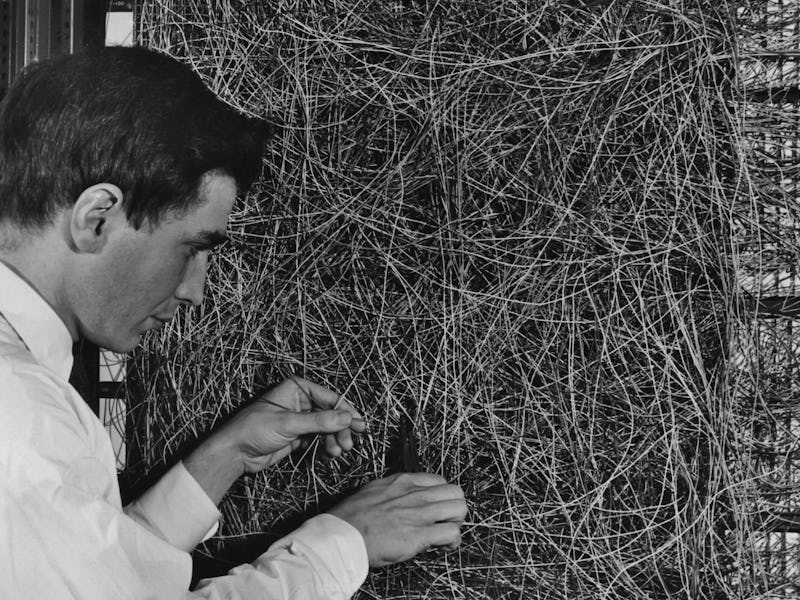

The Perceptron, invented by Frank Rosenblatt, arguably laid the foundations for AI. The electronic analog computer was a learning machine designed to predict whether an image belonged in one of two categories. This revolutionary machine was filled with wires that physically connected different components together. Modern-day artificial neural networks that underpin familiar AI like ChatGPT and DALL-E are software versions of the Perceptron, except with substantially more layers, nodes, and connections.

If the Perceptron returned the wrong answer, it would alter its connections so that it could make a better prediction the next time around. Familiar modern AI systems work in much the same way. Using a prediction-based format, large language models, or LLMs, can produce impressive long-form text-based responses and associate images with text to produce new images based on prompts. These systems get better and better as they interact more with users.
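For readers who want to see that error-driven adjustment concretely, here is a minimal software sketch of the classic perceptron learning rule. It is an illustration only, not a model of Rosenblatt's analog hardware: the function names `train_perceptron` and `predict`, the learning rate, and the toy data (the logical AND of two inputs) are all assumptions made for this example.

```python
def predict(weights, bias, x):
    """Return 1 if the weighted sum of inputs exceeds zero, else 0."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, labels, epochs=20, lr=1.0):
    """Train a two-category perceptron (hypothetical helper; labels are 0 or 1)."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in zip(samples, labels):
            error = target - predict(weights, bias, x)
            if error != 0:
                # Wrong answer: nudge each connection toward the correct output.
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
                mistakes += 1
        if mistakes == 0:
            # A full pass with no errors means nothing is left to adjust.
            break
    return weights, bias


# Toy data (an assumption for illustration): output 1 only when both inputs are 1.
samples = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [0, 0, 0, 1]

weights, bias = train_perceptron(samples, labels)
for x in samples:
    print(x, "->", predict(weights, bias, x))
```

The connections change only when the prediction is wrong, which is the behavior described above. A single-layer rule like this can only separate categories that a straight line can divide, one reason modern networks stack many more layers.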

AI boom and bust

In the decade or so after Rosenblatt unveiled the Mark I Perceptron, experts like Marvin Minsky claimed that the world would “have a machine with the general intelligence of an average human being” by the mid- to late 1970s. But despite some success, humanlike intelligence was nowhere to be found.

It quickly became apparent that the AI systems knew nothing about their subject matter. Without the appropriate background and contextual knowledge, it’s nearly impossible to accurately resolve ambiguities present in everyday language – a task humans perform effortlessly. The first AI “winter,” or period of disillusionment, hit in 1974 following the perceived failure of the Perceptron.

However, by 1980, AI was back in business, and the first official AI boom was in full swing. There were new expert systems, AIs designed to solve problems in specific areas of knowledge, that could identify objects and diagnose diseases from observable data. There were programs that could make complex inferences from simple stories, the first driverless car ready to hit the road, and robots that could read and play music for live audiences.

But it wasn’t long before the same problems stifled excitement once again. In 1987, the second AI winter hit. Expert systems were failing because they couldn’t handle novel information.

The 1990s changed the way experts approached problems in AI. Although the eventual thaw of the second winter didn’t lead to an official boom, AI underwent substantial changes. Researchers tackled the problem of knowledge acquisition with data-driven approaches to machine learning, letting systems learn from data.

This time also marked a return to the neural-network-style Perceptron, but this version was far more complex, dynamic, and, most importantly, digital. The return to the neural network, along with the invention of the web browser and an increase in computing power, made it easier to collect images, mine for data, and distribute datasets for machine learning tasks.

Familiar refrains

Fast forward to today, and confidence in AI progress has begun once again to echo promises made nearly 60 years ago. The term “artificial general intelligence” is used to describe the activities of LLMs such as those powering AI chatbots like ChatGPT. Artificial general intelligence, or AGI, describes a machine that has intelligence equal to humans, meaning the machine would be self-aware, able to solve problems, learn, plan for the future, and possibly be conscious.

Just as Rosenblatt thought his Perceptron was a foundation for a conscious, humanlike machine, some contemporary AI theorists think the same of today’s artificial neural networks. In 2023, Microsoft published a paper saying that “GPT-4’s performance is strikingly close to human-level performance.”

But before claiming that LLMs are exhibiting human-level intelligence, it might help to reflect on the cyclical nature of AI progress. Many of the same problems that haunted earlier iterations of AI are still present today. The difference is how those problems manifest.

For example, the knowledge problem persists to this day. ChatGPT continually struggles to respond to idioms, metaphors, rhetorical questions, and sarcasm – unique forms of language that go beyond grammatical connections and instead require inferring the meaning of the words based on context.

Artificial neural networks can, with impressive accuracy, pick out objects in complex scenes. But give an AI a picture of a school bus lying on its side, and it will very confidently say it’s a snowplow 97 percent of the time.

Lessons to heed

In fact, it turns out that AI is quite easy to fool in ways that humans would immediately identify. I think it’s a consideration worth taking seriously in light of how things have gone in the past.

The AI of today looks quite different from the AI of the past, but the problems of the past remain. As the saying goes, “History may not repeat itself, but it often rhymes.”

This article was originally published on The Conversation by Danielle Williams at Washington University in St. Louis.